Your AI app

remembers the chat,

forgets the person.

Orbit fixes that.

Orbit is memory for chatbots, copilots, and agent products. Ingest events, retrieve sharp context, send feedback. Orbit learns what matters, trims the noise, and keeps your prompt on speaking terms with the context window.

AI memory is still

a three-ring circus.

Building memory for an AI app still means wiring multiple data systems, inventing ranking rules, and hoping quality does not decay in month two. You spend the sprint on plumbing before your product does anything useful.

Memory Is Fragmented

Vector index, relational tables, cache, embedding provider. Different contracts, different failure modes, and no single source of truth.

vector_db.store(everything)
redis.cache(maybe_relevant)
postgres.dump(just_in_case)
config.yaml  # now with extra drama

Importance Is Hand-Waved

Hardcoded ranking weights age quickly. They do not learn from outcomes, and they definitely do not read your postmortems.

# These values came from vibes
importance = 0.9 if recent else (0.7 if similar else 0.5)

No Feedback Loop

Most stacks cannot answer: did this memory help the response or sabotage it? Without a loop, quality plateaus.

# What do you actually retrieve
# that helps? Nobody knows.
feedback_loop = None
improvement = 0

Retention Is Guesswork

Keep data for 30 days? 90? Forever? Old context piles up and starts overriding current reality.

redis_client.setex(
    f"recent_{user_id}",
    86400,  # because somebody picked it once
    json.dumps(messages)
)

Context Budget Burn

To be safe, teams stuff prompts with 20+ memory items. Most are irrelevant, but all of them cost tokens.

Recent messages: 10
Vector results: 5
User history: 5
Total: 20 items + noise
# Great for latency charts, not answers

Quality Drifts Down

As data grows, personalization often regresses. Old truths compete with new behavior, and the assistant starts sounding out of date.

Month 1: 70% satisfaction
Month 2: 69% satisfaction
Month 3: 68% satisfaction
# More data, less clarity

Memory plumbing

to memory product.

Orbit replaces a stitched-together memory stack with one contract. You send events, fetch context, and close the loop with feedback. Ranking, decay, inference, and retrieval tuning happen behind the API.
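As a rough illustration of the three-call loop described above (send events, fetch context, send feedback), here is a toy in-memory stand-in. It is not the Orbit SDK: the class `ToyMemoryStore`, its method names, the word-overlap relevance, and the score constants are all assumptions made for this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    memory_id: int
    user_id: str
    text: str
    score: float = 0.5  # assumed neutral starting importance


@dataclass
class ToyMemoryStore:
    """In-memory stand-in for a memory service. Hypothetical, not the Orbit SDK."""

    memories: list = field(default_factory=list)

    def ingest(self, user_id: str, text: str) -> int:
        """Store one event and return its id."""
        memory = Memory(len(self.memories), user_id, text)
        self.memories.append(memory)
        return memory.memory_id

    def retrieve(self, user_id: str, query: str, limit: int = 3) -> list:
        """Return this user's memories that share words with the query, best first."""
        query_words = set(query.lower().split())

        def relevance(memory: Memory) -> float:
            overlap = len(query_words & set(memory.text.lower().split()))
            return overlap * memory.score

        candidates = [m for m in self.memories if m.user_id == user_id]
        ranked = sorted(candidates, key=relevance, reverse=True)
        return [m for m in ranked if relevance(m) > 0][:limit]

    def feedback(self, memory_id: int, helpful: bool) -> None:
        """Nudge a memory's score up or down, clamped to [0.1, 1.0]."""
        memory = self.memories[memory_id]
        delta = 0.1 if helpful else -0.1
        memory.score = min(1.0, max(0.1, memory.score + delta))


store = ToyMemoryStore()
loop_id = store.ingest("alice", "Alice struggled with for loop syntax")
store.ingest("alice", "Alice prefers short examples")

context = store.retrieve("alice", "explain a for loop")
store.feedback(loop_id, helpful=True)
```

The point of the sketch is the shape of the contract: the caller only ever ingests, retrieves, and reports outcomes, and any ranking logic stays behind those three calls.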

import json
import time

from fastapi import FastAPI
from sqlalchemy import create_engine, text
import pinecone
from redis import Redis
from openai import OpenAI

app = FastAPI()
db = create_engine("postgresql://...")
pinecone.init(api_key="pk-...")  # legacy pinecone-client (pre-3.0) setup
redis_client = Redis(host="localhost", port=6379)
openai_client = OpenAI(api_key="sk-...")

class MemoryManager:
    def __init__(self):
        self.pinecone_index = pinecone.Index("coding-chatbot")
        self.db = db
        self.redis = redis_client

    def store_interaction(self, user_id, message, response):
        # Three writes to three systems for one chat turn.
        timestamp = int(time.time())
        embedding = openai_client.embeddings.create(
            input=message,
            model="text-embedding-3-small"
        ).data[0].embedding
        self.pinecone_index.upsert(
            vectors=[(f"msg_{user_id}_{timestamp}",
                      embedding, {"user_id": user_id})]
        )
        with self.db.begin() as conn:  # begin() commits on success
            conn.execute(text("INSERT INTO interactions ..."))
        redis_client.setex(
            f"recent_{user_id}", 86400,
            json.dumps([message, response])
        )

    def retrieve_context(self, user_id, message, limit=5):
        raw = redis_client.get(f"recent_{user_id}")
        cached = json.loads(raw) if raw else []
        embedding = openai_client.embeddings.create(...)
        vector_results = self.pinecone_index.query(...)
        with self.db.connect() as conn:
            db_results = conn.execute(...)
        return self._manually_rank_context(
            vector_results, db_results, cached
        )

    def _manually_rank_context(self, vectors, db, recent):
        # Hardcoded. Never learns.
        ranked = []
        for item in recent:
            ranked.append({"importance": 0.9})
        for result in vectors:
            ranked.append({"importance": 0.7})
        for result in db:
            ranked.append({"importance": 0.5})
        # Highest importance first.
        return sorted(ranked, key=lambda x: x["importance"], reverse=True)[:5]

Coding tutor chatbot.

Same app, two memory systems.

This is where memory either helps your product grow up or keeps it stuck rerunning old conversations. Compare outcomes across day one, day thirty, and real production load.

Alice asks: 'What is a for loop?'

Orbit is not

just a vector wrapper.

It is a complete memory layer for developer products: ingestion, ranking, decay, personalization inference, and observability in one runtime.

Semantic Interpretation

Orbit stores meaning, not just tokens. Queries match intent and context, not brittle keyword overlap.

When a user asks the same thing three different ways, Orbit can still treat it as one recurring signal.

Outcome-Weighted Importance

Memory rank is adjusted by outcomes and feedback. Helpful memories move up; distracting ones cool off.

The system learns from production behavior instead of frozen constants tucked in a helper file.
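One simple way to picture outcome-weighted importance is an exponential moving average over feedback. This is a sketch under assumptions, not Orbit's actual ranking: the function name `update_importance`, the 0.5 starting value, and the learning rate are all hypothetical.

```python
def update_importance(importance: float, helpful: bool, learning_rate: float = 0.2) -> float:
    """Move importance toward 1.0 on helpful feedback and toward 0.0 otherwise."""
    target = 1.0 if helpful else 0.0
    return importance + learning_rate * (target - importance)


# Two memories start from the same prior and diverge as feedback arrives.
helpful_memory = 0.5
distracting_memory = 0.5
for _ in range(3):
    helpful_memory = update_importance(helpful_memory, helpful=True)
    distracting_memory = update_importance(distracting_memory, helpful=False)
```

After three rounds the helpful memory sits near 0.74 and the distracting one near 0.26, without anyone editing a constant by hand.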

Adaptive Decay

Old memories are not deleted blindly or kept forever. Orbit decays stale context while preserving durable facts.

Yesterday's confusion fades when a user improves. No manual cleanup cron theater required.
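To make the idea concrete, here is a minimal decay sketch in which each kind of memory has its own half-life. The memory kinds, the half-life values, and the function name are assumptions for illustration; the page does not say how Orbit computes decay.

```python
# Hypothetical half-lives per memory kind, in days. Durable facts decay slowly.
HALF_LIFE_DAYS = {
    "transient_confusion": 7.0,
    "stated_preference": 180.0,
}


def decayed_weight(base_weight: float, kind: str, age_days: float) -> float:
    """Exponential decay: the weight halves every HALF_LIFE_DAYS[kind] days."""
    half_life = HALF_LIFE_DAYS[kind]
    return base_weight * 0.5 ** (age_days / half_life)


# After four weeks the old confusion has mostly faded; the preference has not.
confusion_after_month = decayed_weight(1.0, "transient_confusion", age_days=28)
preference_after_month = decayed_weight(1.0, "stated_preference", age_days=28)
```

A fuller version might also lengthen a memory's half-life whenever feedback confirms it is still useful, which is one reading of "adaptive".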

Diversity-Aware Retrieval

Orbit balances relevance with coverage so one long response does not crowd out profile facts and progress signals.

Top-k retrieval stays useful because results are ranked for utility, not just verbosity.
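A well-known way to balance relevance against redundancy is maximal marginal relevance (MMR). Whether Orbit uses MMR is not stated here; the sketch below only shows how a diversity-aware selection can keep a near-duplicate from crowding out a profile fact. The vectors and memory names are made up for the example.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mmr_select(query_vec, candidates, k=2, trade_off=0.5):
    """Greedy MMR over a dict of name -> vector; returns selected names in order."""
    selected = []
    remaining = dict(candidates)
    while remaining and len(selected) < k:

        def mmr_score(name):
            relevance = cosine(query_vec, remaining[name])
            # Redundancy is the highest similarity to anything already picked.
            redundancy = max(
                (cosine(remaining[name], candidates[s]) for s in selected),
                default=0.0,
            )
            return trade_off * relevance - (1 - trade_off) * redundancy

        best = max(remaining, key=mmr_score)
        selected.append(best)
        del remaining[best]
    return selected


# Toy 2-D "embeddings": two near-duplicate explanations and one profile fact.
candidates = {
    "loop_explanation_long": [0.9, 0.3],
    "loop_explanation_followup": [0.9, 0.2],
    "profile_beginner": [0.3, 0.9],
}
selected = mmr_select([1.0, 0.4], candidates, k=2)
```

Ranking by similarity alone would return both loop explanations. With the redundancy penalty, the second slot goes to the profile fact instead.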

Inferred User Memory

Orbit can write inferred memories like learning patterns and style preferences when repeated evidence exists.

You are not limited to raw chat logs. The system builds compact user understanding over time.
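The "repeated evidence" condition can be sketched as a simple threshold over observed signals. The signal names, the threshold of three, and the output fields are assumptions for illustration, not Orbit's actual inference rules or schema.

```python
from collections import Counter


def infer_memories(events, min_evidence=3):
    """Turn (user_id, signal) pairs seen at least min_evidence times into inferred memories."""
    counts = Counter(events)
    return [
        {"user_id": user_id, "inferred": signal, "evidence_count": count}
        for (user_id, signal), count in counts.items()
        if count >= min_evidence
    ]


events = [
    ("alice", "asks_for_examples"),
    ("alice", "prefers_python"),
    ("alice", "asks_for_examples"),
    ("alice", "asks_for_examples"),
]
inferred = infer_memories(events)
```

A single mention of Python is not enough to write a memory here, while three requests for examples are. Keeping the evidence count alongside the inferred memory also gives a starting point for explaining why it exists.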

Transparent Provenance

Retrieval responses include inference provenance metadata, so you can see why a memory exists and what produced it.

When quality shifts, debugging is evidence-based, not detective fiction.

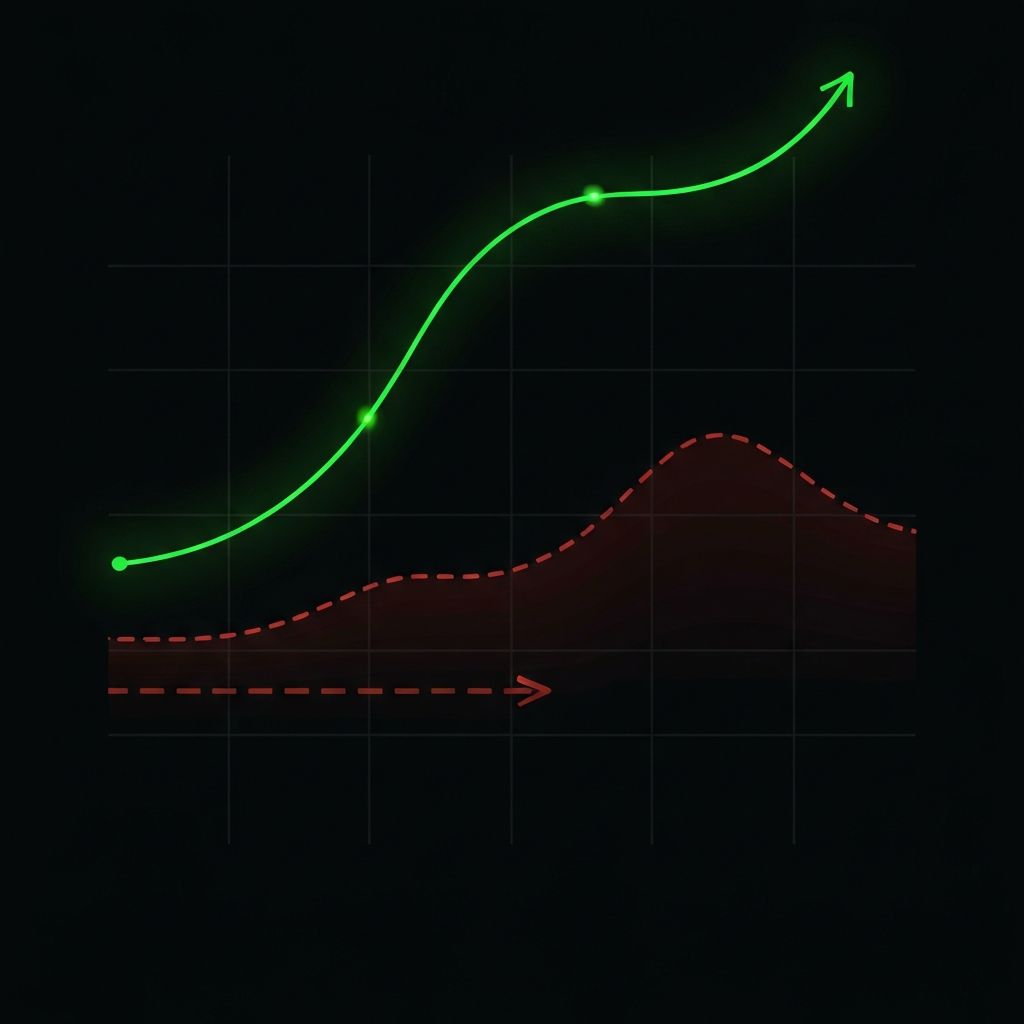

Memory quality

compounds.

In production, memory either gets noisier or gets smarter. Orbit is built so retrieval quality improves with feedback instead of degrading under data growth.

Same team.

Very different quarter.

"Let me wire vector search, SQL, and cache first..."

Most of the week goes to memory plumbing and ranking hacks.

Assistant launches, but personalization feels shallow.

"Let me integrate Orbit and ship the product flow."

Core memory loop is up quickly with ingest, retrieve, and feedback.

Assistant launches with focused, user-aware context.

"Why is the model suddenly quoting stale context?"

Team spends cycles tuning decay and fighting noisy retrieval.

Progress is possible, but slow and expensive.

"Retrieval quality trend is up. Nice."

Team focuses on product features while memory keeps learning.

Users notice the assistant adapting to their progress.

"We need a memory rewrite before the next release."

Scaling pressure lands on custom infra and brittle heuristics.

Roadmap slows because memory stack became its own project.

"Memory is boring now. Exactly what we wanted."

Runtime stays stable as traffic grows.

Team keeps shipping while personalization gets sharper.

Most effort goes to

memory maintenance.

Teams spend serious time babysitting relevance, retention, and ranking. The product moves, but slower than it should.

Most effort goes to

user experience.

Memory stays focused, adapts with feedback, and remains observable. Engineers spend time on product decisions instead of memory triage.

Memory should be a product advantage, not your side quest.

Ready to ship an AI app

that remembers people,

not just prompts?

Orbit handles memory infrastructure so your team can focus on product behavior, response quality, and user outcomes.